
This option defaults to False (disabled).

H2O supports extremely randomized trees (XRT) via histogram_type="Random". When this is specified, the algorithm samples N-1 points from min…max and uses the sorted list of those points to find the best split. The cut points are random rather than uniform. For example, to generate 4 bins for a feature ranging from 0 to 100, 3 random numbers would be generated in this range (13.2, 89.12, 45.0). The sorted list of these random numbers forms the histogram bin boundaries, giving the bins 0-13.2, 13.2-45.0, 45.0-89.12, and 89.12-100.

This is suitable for small datasets, as there is no network overhead, but fewer CPUs are used.

calibrate_model: Use Platt scaling to calculate calibrated class probabilities. Calibration can provide more accurate estimates of class probabilities. This option defaults to False (disabled).

calibration_frame: Specifies the frame to be used for Platt scaling.

calibration_method: Calibration method to use. Must be one of "auto", "platt_scaling", or "isotonic_regression".

check_constant_response: Check if the response column is a constant value. If enabled (default), an exception is thrown if the response column is a constant value. If disabled, the model will train regardless of whether the response column is constant.

col_sample_rate_change_per_level: Changes the column sampling rate as a function of the depth in the tree.
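The calibrate_model and calibration_frame options rely on Platt scaling: a sigmoid is fitted that maps the model's uncalibrated class-1 scores to probabilities, using a separate frame. The following is a minimal stdlib Python sketch, assuming binary 0/1 labels and scores already computed on such a calibration frame. The helpers `fit_platt` and `calibrate` are hypothetical, the fit uses plain gradient descent on log loss, and Platt's original target smoothing and Newton-style optimizer are omitted, so this is an illustration of the technique rather than H2O's implementation.

```python
import math
from typing import List, Tuple


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fit_platt(scores: List[float], labels: List[int],
              lr: float = 0.5, epochs: int = 5000) -> Tuple[float, float]:
    """Fit p(y=1 | s) = sigmoid(a * s + b) by gradient descent on mean log loss.

    Hypothetical helper: `scores` are uncalibrated model outputs on a held-out
    calibration frame, `labels` are the matching 0/1 responses.
    """
    a, b = 1.0, 0.0
    n = len(scores)
    for _ in range(epochs):
        grad_a = grad_b = 0.0
        for s, y in zip(scores, labels):
            p = sigmoid(a * s + b)
            # For z = a * s + b, d(log loss)/dz = p - y.
            grad_a += (p - y) * s
            grad_b += p - y
        a -= lr * grad_a / n
        b -= lr * grad_b / n
    return a, b


def calibrate(score: float, a: float, b: float) -> float:
    """Map an uncalibrated score to a calibrated class-1 probability."""
    return sigmoid(a * score + b)


# Hypothetical uncalibrated class-1 scores on a calibration frame, with labels.
scores = [0.05, 0.10, 0.20, 0.35, 0.50, 0.60, 0.80, 0.90, 0.95, 0.98]
labels = [0, 0, 1, 0, 0, 1, 1, 0, 1, 1]
a, b = fit_platt(scores, labels)
print(f"a={a:.3f}, b={b:.3f}, calibrated(0.9)={calibrate(0.9, a, b):.3f}")
```

Because the fitted sigmoid has an intercept, the calibrated probabilities on the calibration frame sum to the number of positive labels once the fit has converged, which is one quick sanity check on the result.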

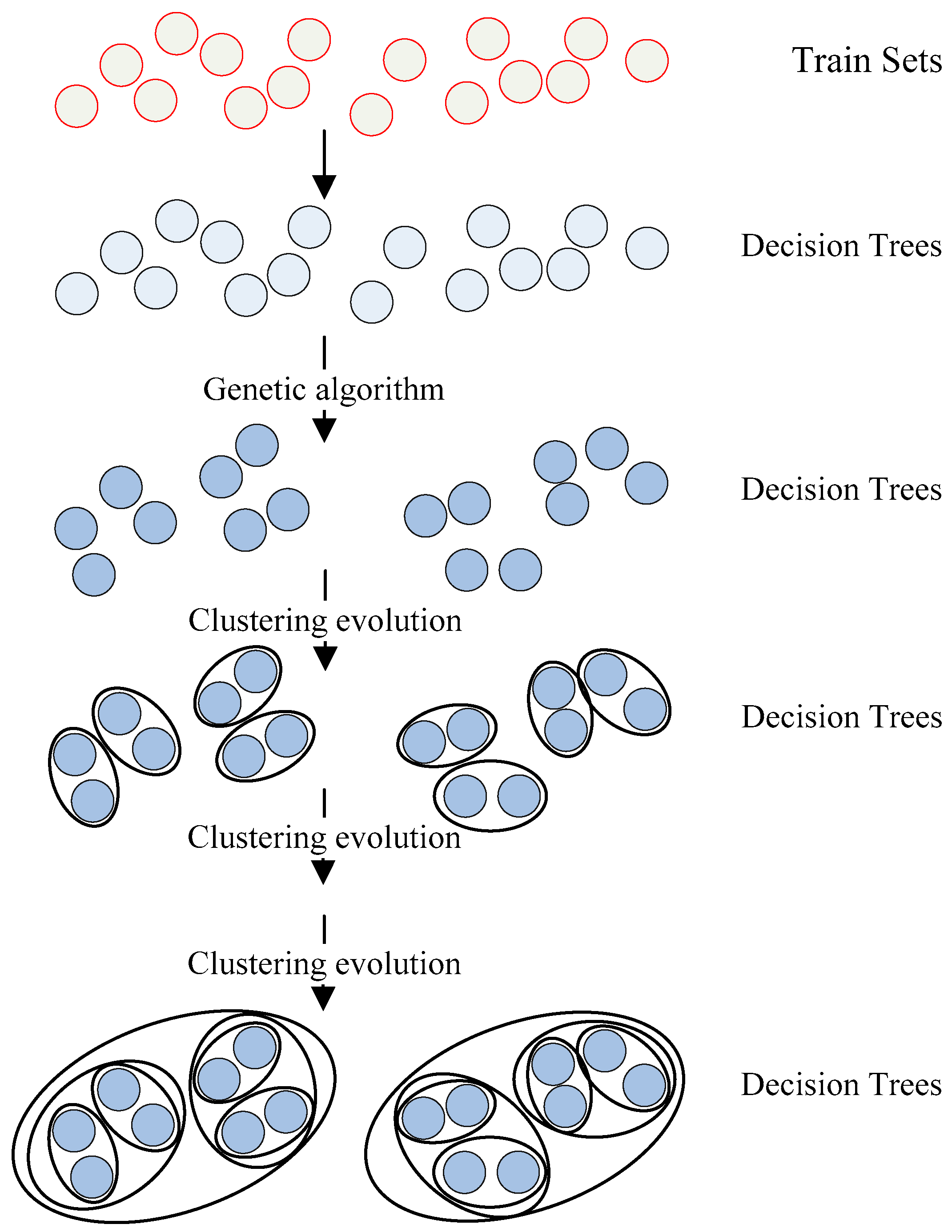

Distributed Random Forest (DRF) is a powerful classification and regression tool. When given a set of data, DRF generates a forest of classification or regression trees, rather than a single classification or regression tree. Each of these trees is a weak learner built on a subset of rows and columns. Both classification and regression take the average prediction over all of their trees to make a final prediction, whether predicting for a class or a numeric value. (Note: For a categorical response column, DRF maps factors (e.g. 'dog', 'cat', 'mouse') in lexicographic order to a name lookup array with integer indices.)

The current version of DRF is fundamentally the same as in previous versions of H2O (same algorithmic steps, same histogramming techniques), with the exception of the following changes:

- Improved ability to train on categorical variables (using the nbins_cats parameter)
- Minor changes in histogramming logic for some corner cases

By default, DRF builds half as many trees for binomial problems, similar to GBM: it uses a single tree to estimate the probability of class 0, \(p_0\), and then computes the probability of class 1 as \(1.0 - p_0\). For multiclass problems, a tree is used to estimate the probability of each class separately.

There was also some code cleanup and refactoring to support observation weights and cross-validation. DRF no longer has a special-cased histogram for classification (class DBinomHistogram has been superseded by DRealHistogram), since it was not applicable to cases with observation weights or to cross-validation.

In random forests, a random subset of candidate features is used to determine the most discriminative thresholds, which are picked as the splitting rule. In extremely randomized trees (XRT), randomness goes one step further in the way that splits are computed. As in random forests, a random subset of candidate features is used, but instead of looking for the most discriminative thresholds, thresholds are drawn at random for each candidate feature, and the best of these randomly generated thresholds is picked as the splitting rule. This usually reduces the variance of the model a bit more, at the cost of a slight increase in bias.
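The XRT split rule described above can be illustrated on a single numeric feature. The following is a minimal Python sketch, not H2O's implementation (which works over histogram bins across many columns in a distributed setting). It assumes a regression target, scores candidate thresholds by reduction in sum of squared errors, and draws N-1 cut points uniformly with Python's `random` module, mirroring the histogram_type="Random" description. All helper names are hypothetical.

```python
import random
from typing import List, Optional, Sequence, Tuple


def random_cut_points(lo: float, hi: float, nbins: int,
                      rng: random.Random) -> List[float]:
    """Draw nbins - 1 cut points uniformly from [lo, hi] and sort them."""
    return sorted(rng.uniform(lo, hi) for _ in range(nbins - 1))


def bins_from_cut_points(lo: float, hi: float,
                         cuts: Sequence[float]) -> List[Tuple[float, float]]:
    """Turn (possibly unsorted) cut points into consecutive (low, high) bins."""
    edges = [lo, *sorted(cuts), hi]
    return list(zip(edges[:-1], edges[1:]))


def sse(ys: Sequence[float]) -> float:
    """Sum of squared errors around the mean (0.0 for an empty sequence)."""
    if not ys:
        return 0.0
    mean = sum(ys) / len(ys)
    return sum((y - mean) ** 2 for y in ys)


def best_random_split(xs: Sequence[float], ys: Sequence[float], nbins: int,
                      rng: random.Random) -> Optional[Tuple[float, float]]:
    """Return (threshold, sse_reduction) for the best randomly drawn threshold.

    Only the random cut points are evaluated, not every possible threshold;
    the best of them becomes the splitting rule (assumed criterion: SSE).
    """
    cuts = random_cut_points(min(xs), max(xs), nbins, rng)
    parent = sse(ys)
    best = None
    for t in cuts:
        left = [y for x, y in zip(xs, ys) if x <= t]
        right = [y for x, y in zip(xs, ys) if x > t]
        if not left or not right:
            continue  # skip degenerate splits that leave one side empty
        gain = parent - sse(left) - sse(right)
        if best is None or gain > best[1]:
            best = (t, gain)
    return best


# The 4-bin example for a feature ranging from 0 to 100:
print(bins_from_cut_points(0, 100, [13.2, 89.12, 45.0]))
# -> [(0, 13.2), (13.2, 45.0), (45.0, 89.12), (89.12, 100)]

# Hypothetical toy data with two clusters of responses.
xs = [1, 2, 3, 4, 10, 11, 12, 13]
ys = [1.0, 1.1, 0.9, 1.0, 5.0, 5.2, 4.8, 5.0]
split = best_random_split(xs, ys, nbins=4, rng=random.Random(42))
print(split)
```

Evaluating only a handful of random thresholds is what makes each split cheaper and noisier than an exhaustive search, which is the source of the extra variance reduction (and slight bias increase) mentioned above.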
